In this blog post, we are going to learn how to enable Semisynchronous Replication in MariaDB.

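MariaDB provides a semisynchronous replication option in addition to its standard asynchronous replication.

In asynchronous replication, slaves request events from the Master’s binary log, and the Master does not wait for a slave to confirm that an event has been received. With semisynchronous replication, a commit on the Master waits until at least one semisynchronous slave acknowledges that it has received the transaction’s events.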

Semisynchronous Replication

The procedure below applies only to MariaDB 10.3.3 and later, where Semisynchronous Replication is built into the MariaDB server and is no longer provided by a plugin.
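Since the steps depend on the server version, it may be worth confirming it first. A minimal check, assuming you are connected with any SQL client to the server you plan to configure:

```sql
-- Returns the server version string; it should report 10.3.3 or later
SELECT VERSION();
```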

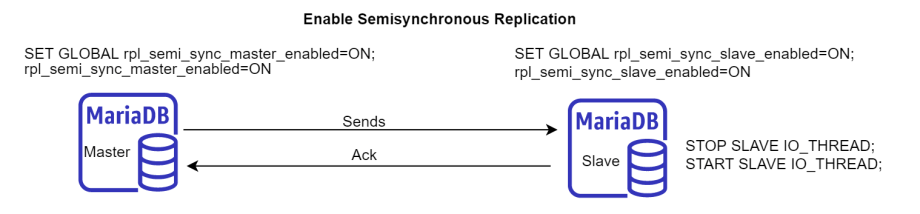

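To enable Semisynchronous Replication on the Master, set the rpl_semi_sync_master_enabled system variable. It can be set dynamically with SET GLOBAL.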

SET GLOBAL rpl_semi_sync_master_enabled=ON;

It can also be set in my.cnf:

[mariadb]
...
rpl_semi_sync_master_enabled=ON
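To confirm that the setting took effect on the Master, the system variable and its related status variable can be inspected. This is only a sketch of one way to check, assuming a client session on the Master:

```sql
-- Run on the Master.
-- Shows whether semisynchronous replication is enabled in the configuration
SHOW GLOBAL VARIABLES LIKE 'rpl_semi_sync_master_enabled';

-- Shows whether the Master is currently operating in semisynchronous mode
SHOW GLOBAL STATUS LIKE 'Rpl_semi_sync_master_status';
```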

To enable Semisynchronous Replication on the Slave, set the rpl_semi_sync_slave_enabled system variable. It can be set dynamically with SET GLOBAL.

SET GLOBAL rpl_semi_sync_slave_enabled=ON;

It can also be set in my.cnf:

[mariadb]
...
rpl_semi_sync_slave_enabled=ON

If the slave threads are already running when you enable Semisynchronous Replication, you need to restart the slave I/O thread. Otherwise, the slave will continue to use asynchronous replication.

STOP SLAVE IO_THREAD; START SLAVE IO_THREAD;
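After the I/O thread has been restarted, you may want to verify that the slave is really connected in semisynchronous mode. One possible check, assuming a session on the Slave for the first statement and a session on the Master for the second:

```sql
-- Run on the Slave: reports whether semisynchronous replication is active on this slave
SHOW GLOBAL STATUS LIKE 'Rpl_semi_sync_slave_status';

-- Run on the Master: number of semisynchronous slaves currently connected
SHOW GLOBAL STATUS LIKE 'Rpl_semi_sync_master_clients';
```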

Configuring the Master Timeout

In semisynchronous replication, the slave acknowledges the receipt of the transaction’s events only after the events have been written to the relay log and flushed.

If the slave does not acknowledge the transaction within a certain amount of time, a timeout occurs and the Master switches to asynchronous replication.

When this happens, the Rpl_semi_sync_master_status status variable is switched to OFF.

Semisynchronous replication resumes when at least one semisynchronous slave catches up, and at the same time the Rpl_semi_sync_master_status status variable is switched back to ON.
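The fallback described above can be observed through the Master's status variables. A rough monitoring sketch, assuming a session on the Master (how often you poll these values is up to you):

```sql
-- Run on the Master.
-- ON while semisynchronous replication is active, OFF after a timeout
SHOW GLOBAL STATUS LIKE 'Rpl_semi_sync_master_status';

-- Number of commits that were acknowledged by a slave
SHOW GLOBAL STATUS LIKE 'Rpl_semi_sync_master_yes_tx';

-- Number of commits that were not acknowledged by a slave
SHOW GLOBAL STATUS LIKE 'Rpl_semi_sync_master_no_tx';
```

A growing Rpl_semi_sync_master_no_tx value suggests that commits are going through without a slave acknowledgement.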

The timeout period is configurable. It can be changed by setting the rpl_semi_sync_master_timeout system variable, whose value is given in milliseconds.

It can be set dynamically with SET GLOBAL.

SET GLOBAL rpl_semi_sync_master_timeout=20000;

It can also be set in my.cnf:

[mariadb]
...
rpl_semi_sync_master_timeout=20000
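To double check the value currently in effect, the variable can be read back on the Master. A small sketch; the column aliases timeout_ms and timeout_seconds are only illustrative names:

```sql
-- Run on the Master.
-- The variable is stored in milliseconds; the second column converts it to seconds
SELECT @@GLOBAL.rpl_semi_sync_master_timeout AS timeout_ms,
       @@GLOBAL.rpl_semi_sync_master_timeout / 1000 AS timeout_seconds;
```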

Cheers!